

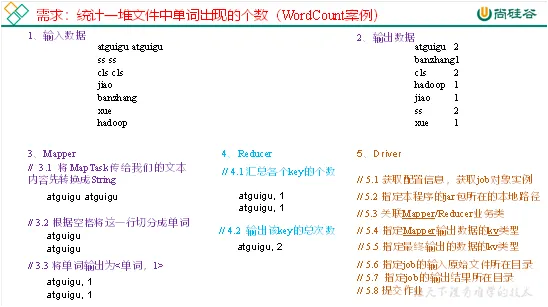

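WordCount Hands-On Example

I. Requirements

- Count the total number of occurrences of each word in a given text file and output the result.

- Input data: the text file hello.txt

- Expected output: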

atguigu 2

banzhang 1

cls 2

hadoop 1

jiao 1

ss 2

xue 1

II. Requirements Analysis

Following the MapReduce programming conventions, write three classes: a Mapper, a Reducer, and a Driver.
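Before moving on to the Hadoop classes, the data flow can be sketched in plain Java. This is only an illustration under stated assumptions, not Hadoop code: the class name `WordCountFlowSketch`, its method names, and the sample input lines are all hypothetical (the lines were chosen so that the result matches the expected output above, since the actual content of hello.txt is not shown). The map step emits one (word, 1) pair per word, the shuffle step groups the pairs by key in sorted order, and the reduce step sums the values of each key.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java simulation of the WordCount data flow; no Hadoop classes are used.
class WordCountFlowSketch {

    // Map step: emit one (word, 1) pair for every word in a line.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.split(" ")) {
            if (!word.isEmpty()) {
                pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    // Shuffle step: group the values by key. A TreeMap keeps the keys sorted,
    // standing in for the key sorting that happens between map and reduce.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce step: sum all values that belong to one key.
    static int reduce(Iterable<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Run the three steps over all input lines and return word -> count.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(pairs).entrySet()) {
            counts.put(group.getKey(), reduce(group.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Hypothetical input lines, not the real hello.txt.
        List<String> lines = Arrays.asList(
                "atguigu atguigu", "ss ss", "cls cls", "jiao", "banzhang", "xue", "hadoop");
        wordCount(lines).forEach((word, count) -> System.out.println(word + " " + count));
    }
}
```

In the real job the framework performs the shuffle step itself, so only the map and reduce logic has to be written by hand, plus a Driver that wires them together.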

III. Preparation

(1) Create a Maven project.

(2) Add the following dependencies to pom.xml:

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.1.3</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.30</version>
        </dependency>
    </dependencies>

(3) In the project's src/main/resources directory, create a file named "log4j.properties" with the following content:

    log4j.rootLogger=INFO, stdout
    log4j.appender.stdout=org.apache.log4j.ConsoleAppender
    log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
    log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
    log4j.appender.logfile=org.apache.log4j.FileAppender
    log4j.appender.logfile.File=target/spring.log
    log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
    log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

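(4) Create the package org.example.mapreduce.wordcount, which is the package declared by the classes in the next section.

IV. Writing the Program

1. Write the Mapper class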

    package org.example.mapreduce.wordcount;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    import java.io.IOException;

    public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        // Reused for every record to avoid allocating new objects per word.
        private final Text outKey = new Text();
        private final IntWritable outValue = new IntWritable(1);

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // 1. Read one line of input
            String line = value.toString();

            // 2. Split the line into words
            String[] words = line.split(" ");

            // 3. Use each word as the output key
            for (String word : words) {
                outKey.set(word);

                // 4. Emit (word, 1) to the context
                context.write(outKey, outValue);
            }
        }
    }
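A note on tokenization: splitting a line on a single space character produces an empty token whenever two spaces appear in a row, and each empty token would then be counted as a word. The sketch below, in plain Java without any Hadoop classes, compares that behavior with splitting on runs of whitespace. The class name `TokenizeSketch` and the helper `tokenize` are hypothetical names used only for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Compares splitting on a single space with splitting on whitespace runs.
class TokenizeSketch {

    // Split on runs of whitespace and drop empty tokens (covers blank lines too).
    static List<String> tokenize(String line) {
        List<String> words = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                words.add(token);
            }
        }
        return words;
    }

    public static void main(String[] args) {
        String line = "ss  ss"; // two spaces between the words

        // Single-space split keeps an empty token in the middle: ["ss", "", "ss"]
        System.out.println(line.split(" ").length); // prints 3

        // Whitespace-run split yields only the real words
        System.out.println(tokenize(line)); // prints [ss, ss]
    }
}
```

For input where words are always separated by exactly one space, both approaches give the same result.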

2. Write the Reducer class

    package org.example.mapreduce.wordcount;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    import java.io.IOException;

    public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        // Reused for every key to avoid allocating a new object per result.
        private final IntWritable outValue = new IntWritable();

        @Override
        protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // Sum all the counts emitted for this word
            int sum = 0;
            for (IntWritable value : values) {
                sum += value.get();
            }

            // Emit (word, total count)
            outValue.set(sum);
            context.write(key, outValue);
        }
    }

3. Write the Driver class

    package org.example.mapreduce.wordcount;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    import java.io.IOException;

    public class WordCountDriver {

        public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
            // 1. Create the configuration and get a Job instance
            Configuration config = new Configuration();
            Job job = Job.getInstance(config);

            // 2. Locate the jar through the driver class
            job.setJarByClass(WordCountDriver.class);

            // 3. Register the Mapper and the Reducer
            job.setMapperClass(WordCountMapper.class);
            job.setReducerClass(WordCountReducer.class);

            // 4. Set the key/value types of the Mapper output
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);

            // 5. Set the key/value types of the final output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // 6. Set the input and output paths
            FileInputFormat.setInputPaths(job, new Path("E:\\zhuanye\\大数据相关文件\\hadoop\\资料\\11_input\\inputword"));
            FileOutputFormat.setOutputPath(job, new Path("E:\\zhuanye\\大数据相关文件\\hadoop\\资料\\11_input\\outputwordcount\\output1"));

            // 7. Submit the job and wait for it to finish
            boolean result = job.waitForCompletion(true);
            System.exit(result ? 0 : 1);
        }
    }
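One practical point when running the Driver: the job fails if the output directory already exists, so the output path has to be changed or the directory deleted before each rerun. The sketch below is a plain-Java illustration for local runs and is not part of the Driver above. It assumes a hypothetical helper class `JobPathsSketch` that takes the input and output paths from the command-line arguments when two are given, falls back to defaults otherwise, and checks the local file system before the job would be submitted.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper for local runs: resolve job paths and pre-check the output directory.
class JobPathsSketch {

    // Use args[0] and args[1] when both are present, otherwise the given defaults.
    static String[] resolvePaths(String[] args, String defaultInput, String defaultOutput) {
        if (args.length >= 2) {
            return new String[] {args[0], args[1]};
        }
        return new String[] {defaultInput, defaultOutput};
    }

    // Fail early with a clear message if the output directory is already there.
    static void requireFreshOutput(Path output) {
        if (Files.exists(output)) {
            throw new IllegalStateException("Output directory already exists: " + output);
        }
    }

    public static void main(String[] args) {
        // The default paths here are placeholders, not the paths used in the Driver.
        String[] paths = resolvePaths(args, "input", "output");
        requireFreshOutput(Paths.get(paths[1]));
        System.out.println("input=" + paths[0] + ", output=" + paths[1]);
    }
}
```

In a Driver, the two resolved strings would be passed to `new Path(...)` in step 6 instead of the hard-coded paths. The check uses `java.nio.file`, so it only applies when the job reads and writes the local file system.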

Author: 苏皓明


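Copyright notice: Unless otherwise stated, all articles on this blog are licensed under the BY-NC-SA license. Please credit the source when reposting!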
